The hidden world of GPT-5 behavior

OpenAI explores the underlying causes and fixes for personality-driven quirks in GPT-5. This research provides insight into model behavior alignment and the technical challenges of scaling next-gen LLMs.

The best of the AI and MCP ecosystem, curated daily.

April 2026 marked a decisive transition from AI as a conversational interface to AI as an autonomous operating layer. The industry moved beyond simple "chat" toward agents with genuine architectural rigor, long-term memory, and the ability to interact directly with hardware and production systems.

The most significant trend this month was the professionalization of agent architecture. Anthropic explicitly detailed the decoupling of the "brain" (reasoning) from the "hands" (execution), a move designed to improve observability and scaling in managed agents. In parallel, the discourse shifted toward the "harness": the runtime environment that dictates how an agent retains and retrieves context. LangChain and Anthropic both pushed for more transparent, developer-controlled memory layers to avoid the pitfalls of proprietary, closed-API black boxes.

The release of GPT-5.5 and DeepSeek-V4 underscored the ongoing battle for reasoning depth and context efficiency. While GPT-5.5 pushed the boundaries of coding speed and research capabilities, DeepSeek-V4’s million-token context window gave agents the "working memory" needed to handle massive project contexts without losing retrieval accuracy. This was complemented by the introduction of native memory for Claude Managed Agents, which shifts the burden of state management from the developer to the platform.

Agents moved from high-level scripting to low-level system optimization. The standout example was Cursor’s multi-agent system optimizing CUDA kernels, achieving a 38% geomean speedup over baselines on NVIDIA Blackwell GPUs. The result suggests that agentic workflows can now work productively at the hardware-software interface. The expansion of the Model Context Protocol (MCP) and OpenAI's introduction of Symphony also signal a move toward standardized orchestration, turning issue trackers and file systems into autonomous agent systems.

The boundary of AI shifted toward the edge. Google’s Gemma 4 and NVIDIA’s VLA (Vision-Language-Action) demos on Jetson Orin Nano show that capable multimodal models can now run on-device, enabling real-time robotic control and local analysis without cloud latency.

Key developments at a glance:

An analysis of how personality-driven quirks and 'goblin outputs' emerged in GPT-5 behavior. It details the timeline, root causes, and the fixes implemented to stabilize model personality.

Evaluating AI models is becoming a critical compute bottleneck as complexity increases. This shift highlights the need for more efficient evaluation frameworks to prevent a slowdown in model iteration.

Hugging Face integrates DeepInfra as an inference provider, expanding the accessibility of high-performance model hosting. This move strengthens the open-source AI ecosystem by lowering the barrier to deploying large-scale models.

NVIDIA releases Nemotron 3 Nano Omni, a multimodal model designed for long-context processing of audio, video, and documents. This enables more capable agents for complex multimodal analysis and retrieval.

Google and Kaggle are launching a 5-day AI Agents intensive course focused on 'vibe coding'. This is a practical resource for developers looking to build and deploy agentic workflows.

OpenAI introduces Symphony, an open-source specification for Codex orchestration. It enables the transformation of issue trackers into autonomous agent systems to reduce developer context switching.

DeepSeek-V4 introduces a massive million-token context window optimized for agentic workflows. This enables agents to maintain significantly larger project contexts and long-term memory without losing retrieval accuracy.

OpenAI has released GPT-5.5, featuring significant improvements in speed and reasoning capabilities. The model is specifically optimized for complex coding, deep research, and multi-tool data analysis.

OpenAI introduces a guide on Codex plugins and skills for automating repeatable workflows and tool integration. This provides a framework for developers to build more reliable, data-driven agentic task completion.

OpenAI introduces Codex, a tool designed to automate complex tasks by connecting various tools and producing concrete outputs like documentation and dashboards. It moves beyond simple chat to a more agentic, output-oriented workflow.

Anthropic introduces built-in memory for Claude Managed Agents, enabling them to learn across tasks and sessions. Developers can now build persistent agents without managing their own memory infrastructure.

Detailed guide on deploying production-grade MCP integrations, covering server design, OAuth via CIMD, and context-efficient client patterns. It clarifies the strategic balance between MCP, direct API calls, and CLIs for connecting agents to core systems.

Google introduces Deep Research and Deep Research Max, a new generation of autonomous research agents designed to handle complex information retrieval and synthesis.

Anthropic explores the architectural decoupling of agent reasoning (the brain) from execution (the hands) to enable better scaling and reliability in managed agents. This approach allows for more robust control and observability when deploying agents at scale.
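To make the brain/hands split concrete, here is a minimal sketch of the general idea, not Anthropic's implementation. All names (`Action`, `Hands`, `brain`, `run`) are hypothetical, and the `brain` function is a hard-coded stand-in for a model call. The point it illustrates is that the reasoning side only emits structured actions, while the execution side owns the tools and records every call, which is where observability comes from.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Action:
    """A structured request emitted by the reasoning side (hypothetical shape)."""
    tool: str
    args: dict


@dataclass
class Hands:
    """Execution side: owns the tools and logs every call it performs."""
    tools: dict[str, Callable[..., object]]
    log: list[tuple[str, dict, object]] = field(default_factory=list)

    def execute(self, action: Action) -> object:
        if action.tool not in self.tools:
            raise KeyError(f"unknown tool: {action.tool}")
        result = self.tools[action.tool](**action.args)
        # Every executed call is recorded, independently of the reasoning side.
        self.log.append((action.tool, action.args, result))
        return result


def brain(goal: str, observations: list[object]) -> Action | None:
    """Reasoning side. A real system would call a model here; this stand-in
    follows a fixed two-step plan and returns None when it is done."""
    if not observations:
        return Action("add", {"a": 2, "b": 3})
    if len(observations) == 1:
        return Action("double", {"x": observations[0]})
    return None


def run(goal: str, hands: Hands) -> list[object]:
    """Loop: the brain proposes an action, the hands execute it and report back."""
    observations: list[object] = []
    while (action := brain(goal, observations)) is not None:
        observations.append(hands.execute(action))
    return observations


hands = Hands(tools={"add": lambda a, b: a + b, "double": lambda x: 2 * x})
results = run("compute 2 * (2 + 3)", hands)
print(results)    # observations returned to the brain
print(hands.log)  # audit trail kept by the execution side
```

Because the two sides communicate only through `Action` values and results, either one could in principle be swapped, scaled, or sandboxed separately, which is the property the decoupling is meant to provide.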

Claude Code now supports routines, allowing developers to define repeatable AI workflows for tasks like backlog grooming and PR reviews. This brings a level of automation to the developer inner loop.

Cursor demonstrated a multi-agent system that autonomously optimized 235 CUDA kernels for NVIDIA Blackwell 200 GPUs. The approach achieved a 38% geomean speedup over baselines in just three weeks, showcasing the power of agentic optimization for low-level performance.
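For readers unfamiliar with the term, a geomean speedup is the geometric mean of the per-kernel speedup ratios, which keeps a few large wins from dominating the average. The sketch below shows the calculation only; the timings are made-up illustrative numbers, not Cursor's data, and the function name `geomean_speedup` is an assumption.

```python
import math


def geomean_speedup(baseline_ms: list[float], optimized_ms: list[float]) -> float:
    """Geometric mean of per-kernel speedups (baseline time / optimized time)."""
    if len(baseline_ms) != len(optimized_ms) or not baseline_ms:
        raise ValueError("need two non-empty timing lists of equal length")
    ratios = [b / o for b, o in zip(baseline_ms, optimized_ms)]
    # Average in log space, then exponentiate: exp(mean(log(r))).
    return math.exp(sum(math.log(r) for r in ratios) / len(ratios))


# Hypothetical timings for three kernels, in milliseconds.
baseline = [10.0, 8.0, 4.0]
optimized = [5.0, 8.0, 2.0]   # per-kernel speedups: 2.0x, 1.0x, 2.0x

speedup = geomean_speedup(baseline, optimized)
print(f"geomean speedup: {speedup:.3f}x")
print(f"improvement: {(speedup - 1) * 100:.1f}%")
```

Under this convention, a reported 38% geomean speedup corresponds to a geometric-mean ratio of 1.38 across the kernel set; individual kernels may have improved by more or less than that.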

Google released Gemma 4, its most capable open model family yet, with multimodal understanding and strong performance across reasoning, coding, and instruction-following tasks. Designed to run on-device and at edge scale, Gemma 4 narrows the gap with frontier closed models while remaining fully open-weight. It is a significant update to Google's most widely used open model series.

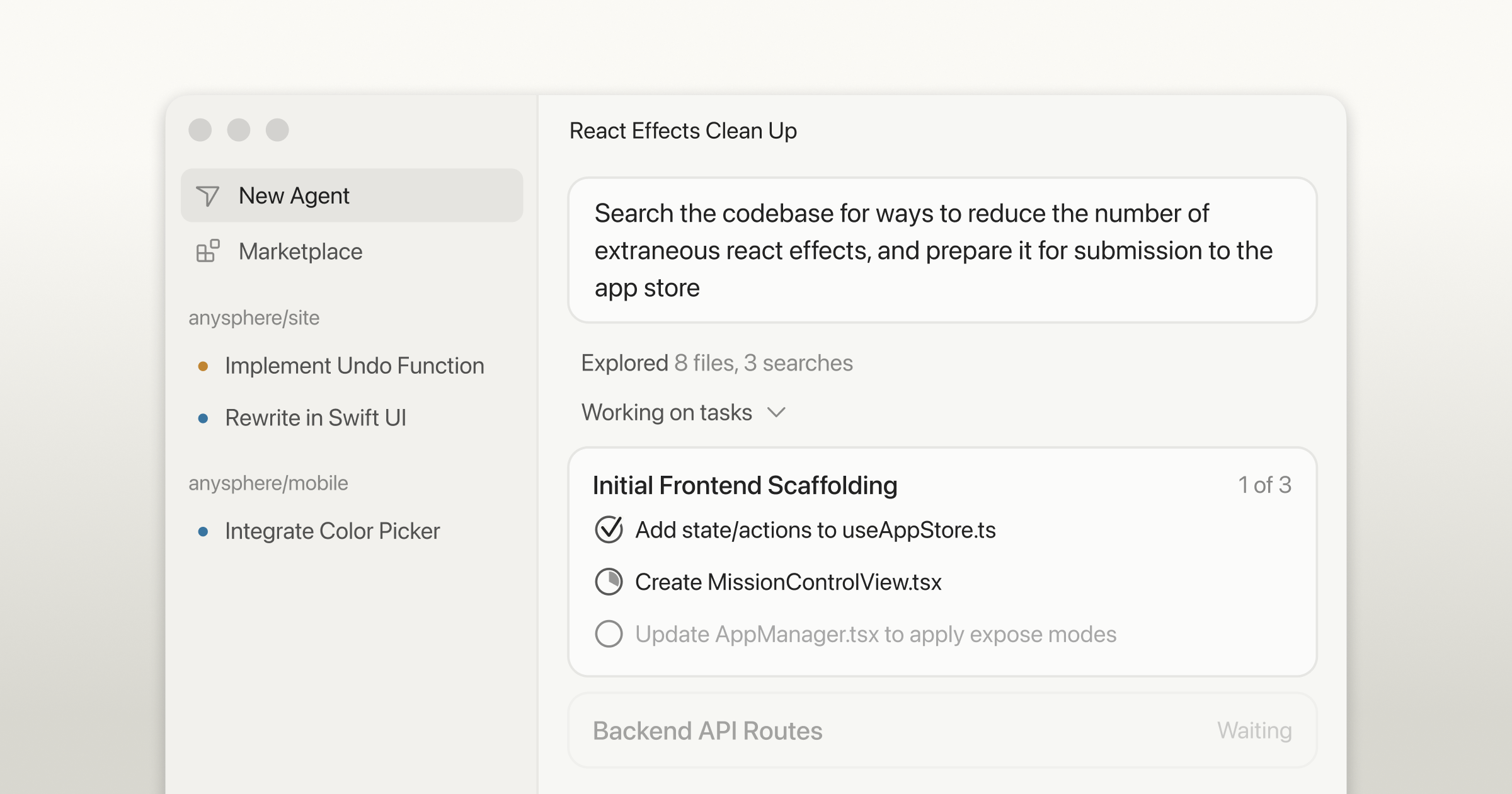

Cursor launched Cursor 3 — a unified workspace for building software with agents, bringing together the editor, cloud agents, and background automations into a single coherent product. The release represents a significant architectural shift from IDE-with-AI-features toward an agent-first development environment. The biggest Cursor product update to date.

H Company released Holo3, a new computer use model that claims state-of-the-art performance on browser and desktop automation benchmarks. The post covers architecture decisions and benchmark results in which Holo3 outperforms existing computer use models on key tasks. It is a significant new entrant in the fast-moving computer use agent space.