GPT-5.5 Instant: smarter, clearer, and more personalized

OpenAI releases GPT-5.5 Instant as the new default model for ChatGPT. The update brings improved accuracy, reduced hallucinations, and more granular personalization controls for users.

The latest from the AI and MCP ecosystem, curated daily.

Sources

OpenAI has released MRC (Multipath Reliable Connection) via the Open Compute Project. This new networking protocol enhances resilience and performance for massive AI training clusters by improving how data is routed across supercomputer networks.

Anthropic provides a practical adoption roadmap and customer examples for integrating Claude into financial services. The guide focuses on compressing time-consuming workflows and improving operational efficiency.

Google introduces event-driven webhooks for the Gemini API, letting developers replace polling with push-based notifications. This reduces latency and friction when managing long-running AI jobs.

OpenAI details the architectural overhaul of its WebRTC stack to support real-time Voice AI. The new system focuses on low latency and seamless conversational turn-taking at a global scale.

A case study on how Claude Code enables non-technical users to build and ship functional apps rapidly. It highlights the shift toward AI-driven development where prompt-based orchestration replaces manual coding.

Cursor explores the iterative process of improving agent harnesses through context optimization, rigorous evaluation, and model-specific tuning to make AI agents more reliable.

Anthropic provides a framework for deploying enterprise-grade agents and introduces Claude Cowork to bring collaborative agent capabilities to professional teams.

Claude Security allows developers to scan code for vulnerabilities and generate fixes using Opus 4.7, available via the Claude Platform and partner integrations.

Detailed technical insights into optimizing Claude Code using prompt caching to reduce latency and cost. Covers effective prompt structuring, tool usage, and compaction strategies for agentic workflows.

OpenAI explores the underlying causes and fixes for personality-driven quirks in GPT-5. This research provides insight into model behavior alignment and the technical challenges of scaling next-gen LLMs.

An analysis of how personality-driven quirks and 'goblin outputs' emerged in GPT-5 behavior. It details the timeline, root causes, and the fixes implemented to stabilize model personality.

Evaluating AI models is becoming a critical compute bottleneck as complexity increases. This shift highlights the need for more efficient evaluation frameworks to prevent a slowdown in model iteration.

Hugging Face integrates DeepInfra as an inference provider, expanding the accessibility of high-performance model hosting. This move strengthens the open-source AI ecosystem by lowering the barrier to deploying large-scale models.

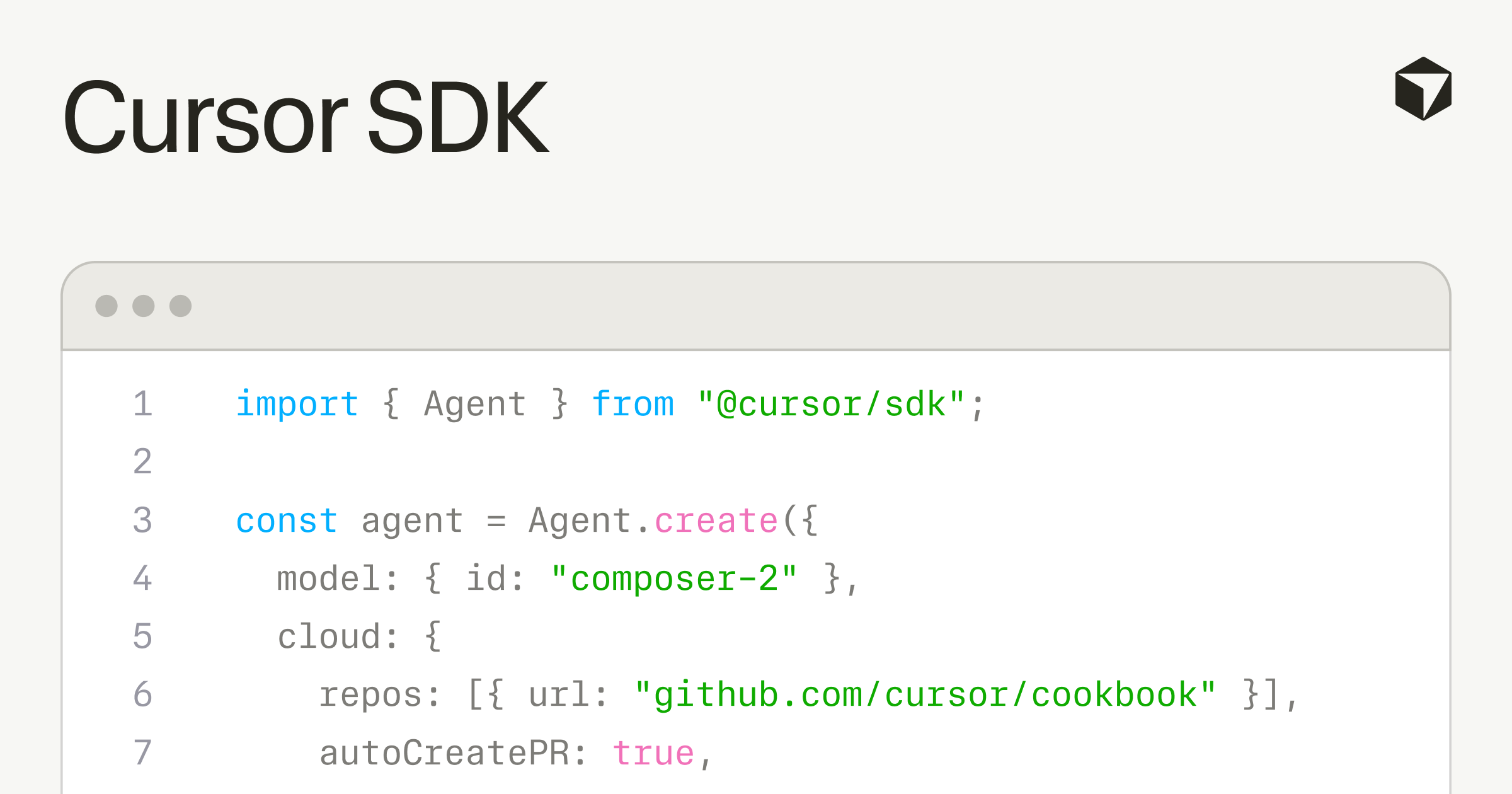

The new Cursor SDK enables developers to launch, steer, and compose custom agents, expanding the ability to build agentic workflows directly into the editor ecosystem.

Anthropic released a comprehensive guide on deploying Claude Cowork for enterprise environments. It details practical use cases and best practices for integrating AI assistants into organizational workflows to improve day-to-day productivity.

The claude-api skill is now integrated into CodeRabbit, JetBrains, Resolve AI, and Warp, enabling production-ready Claude API capabilities directly within these developer tools.

Analysis of how managed agents change the product development lifecycle. Focuses on using agentic tools to automate routine tasks and unblock high-level creative work.

NVIDIA releases Nemotron 3 Nano Omni, a multimodal model designed for long-context processing of audio, video, and documents. This enables more capable agents for complex multimodal analysis and retrieval.

OpenAI GPT models, Codex, and Managed Agents are now available on AWS, providing enterprises with a secure environment to deploy AI agents on their own infrastructure.